Magnific AI, a popular upscaler among digital content creators, recently drew widespread criticism over a recurring error message: “Enhancement process failed.” Artists and developers who relied on the tool saw their workflows interrupted, which raised questions about its reliability and internal architecture. This article looks at why the error occurs and how a lesser-known method, the interpolation bypass, restored sharp textures where the AI failed.

TL;DR

Magnific AI recently struggled with failed upscaling attempts, often showing an “Enhancement process failed” error. User investigations pointed to two main culprits: a model that misreads unfamiliar textures as noise, and cloud servers that time out under load. Interestingly, a manual workaround known as the interpolation bypass method succeeded in retaining fine details and textures. This technique is now gaining traction as a stopgap, and under certain conditions even as the preferred option.

Understanding the “Enhancement Process Failed” Error

When an AI-based service fails to deliver, understanding the root cause is essential. Users of Magnific AI reported sudden halts in image processing, with the software providing little explanation other than the vague message: “Enhancement process failed.” Field testing and user experience pointed to multiple layers of possible failure.

Underlying Reasons

- Texture misclassification: The AI model fails to distinguish between subtle texture variations in the source image, mistaking them for noise.

- Overloaded servers: During peak times, cloud-based computation servers time out silently, causing errors without clear user feedback.

- Failed progressive scaling: Magnific AI relies on multiple stages of resolution enhancement. If any stage fails, the entire process collapses.

- Strict content filters: AI safety filters occasionally flag harmless content as a potential violation, stopping processing entirely.

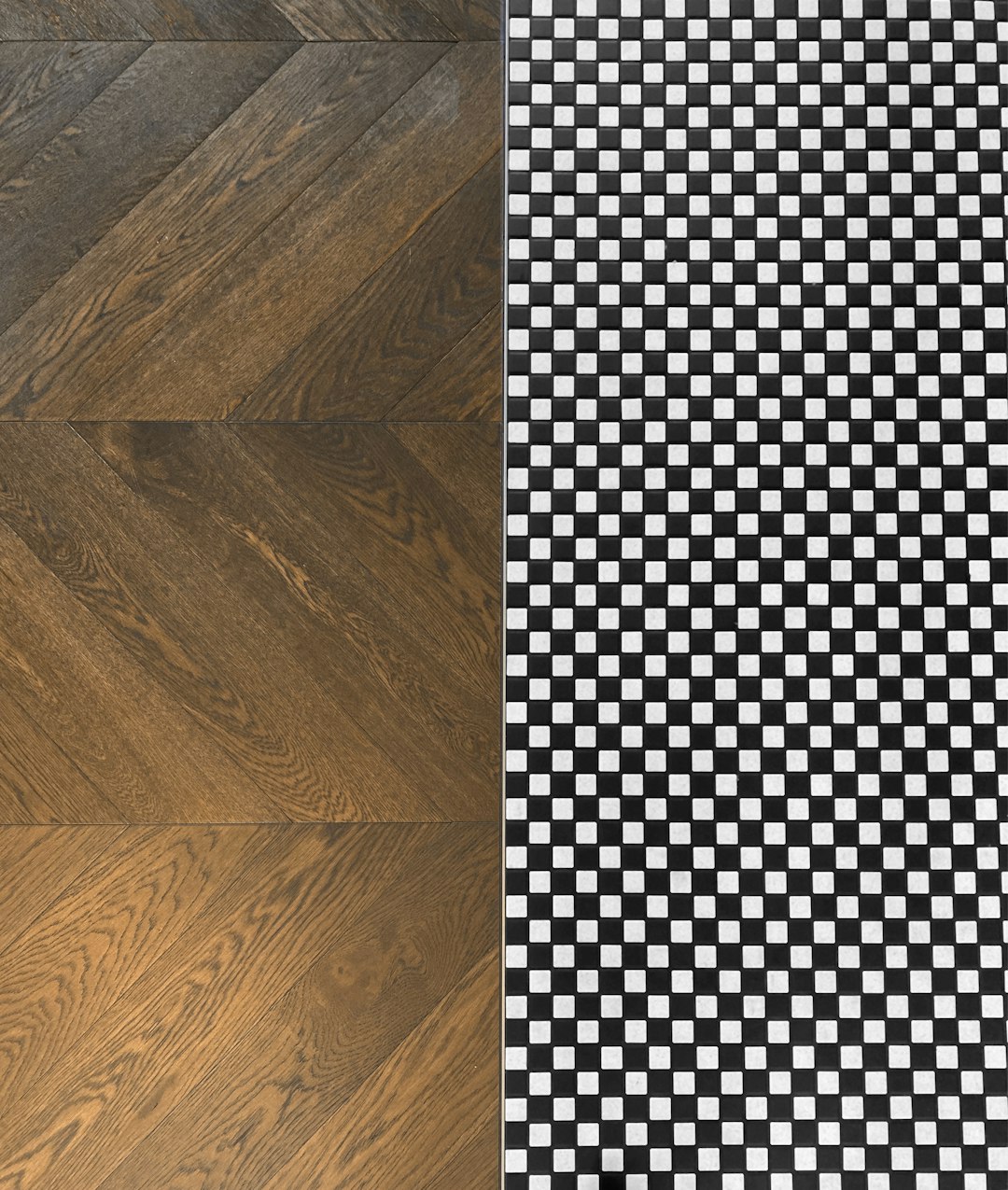

These technical and logistical issues combined to undermine reliability. Especially significant is the software’s handling of natural and artificial textures, particularly cloth fibers, foliage, and architectural lines, which often came out blurred or smeared.
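The progressive-scaling failure mode listed above can be illustrated with a toy pipeline. This is only a sketch of the general idea, not Magnific AI's actual architecture, which is not public: the stage functions `double_nearest` and `failing_stage`, and both runner functions, are hypothetical names invented for the illustration.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]          # grayscale image as rows of pixel values
Stage = Callable[[Image], Image]   # one enhancement stage


def double_nearest(img: Image) -> Image:
    """Hypothetical stage: double the resolution by repeating each pixel."""
    out = []
    for row in img:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def failing_stage(img: Image) -> Image:
    """Hypothetical stage that simulates a silent server timeout."""
    raise RuntimeError("stage timed out")


def run_all_or_nothing(img: Image, stages: List[Stage]) -> Image:
    """Any stage failure discards all earlier work (the reported behavior)."""
    for stage in stages:
        img = stage(img)
    return img


def run_with_checkpoints(img: Image, stages: List[Stage]) -> Tuple[Image, int]:
    """Keep the last good result and report how many stages completed."""
    completed = 0
    for stage in stages:
        try:
            img = stage(img)
        except Exception:
            break  # stop here, but keep what earlier stages produced
        completed += 1
    return img, completed
```

With the checkpointed runner, a failure in the second stage still returns the first stage's output along with a count of completed stages, whereas the all-or-nothing runner surfaces only an error.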

The Role of Textural Analysis and AI Shortcomings

Magnific AI’s draw has always been its deep learning architecture, which identifies edge patterns and reconstructs missing details. However, its dependence on training data becomes its Achilles heel when it is presented with textures that are rare in, or absent from, that data.

For example, a hand-woven carpet from a non-Western region may include patterns the model has never seen, resulting in unsatisfactory output. Similarly, vintage photos and digitally generated images (e.g., from game engines or generative models) often fall outside what the model was trained on and cause the enhancement to misfire.

Evidence of AI Texture Misinterpretation

Post-failure images showed some striking characteristics:

- Smeared fine lines on faces and cloth

- Halo effects around high-contrast edges

- Loss of micro-details, especially in highlights and shadow regions

This led many digital artists to seek alternative upscaling workflows or rebuild their pipelines to accommodate the shortcomings of the AI-based tool.

Why Traditional Methods Still Matter: Enter Interpolation Bypass

A user in an obscure Reddit thread first described bypassing the Magnific AI engine entirely, using what came to be called the interpolation bypass technique. The idea was deceptively simple: use traditional interpolation (e.g., Lanczos, Bicubic) to resize an image, followed by selective texture enhancement using smaller, more controllable models or filters.

Unlike AI-centric workflows that attempt to “guess” texture details, interpolation computes every new pixel as a fixed, weighted average of nearby source pixels. It cannot invent detail that was never in the image, so its output is deterministic and largely free of hallucinated artifacts, although sharper kernels such as Lanczos can still ring slightly at hard edges. The bypass approach returns control to the user and leaves detail enhancement as a separate, deliberate step.
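To make the deterministic nature of interpolation concrete, here is a minimal pure-Python sketch of Lanczos resampling on a single row of pixel values. The function names, the edge-replication border handling, and the default window size `a=3` are choices made for this illustration; production tools such as ImageMagick or Photoshop use their own optimized implementations.

```python
import math


def lanczos_kernel(x: float, a: int = 3) -> float:
    """Lanczos window: sinc(x) * sinc(x / a) for |x| < a, otherwise 0."""
    if x == 0.0:
        return 1.0
    if abs(x) >= a:
        return 0.0
    px = math.pi * x
    return a * math.sin(px) * math.sin(px / a) / (px * px)


def upscale_row(row: list[float], factor: int, a: int = 3) -> list[float]:
    """Upscale one row of pixel values by an integer factor."""
    n = len(row)
    out = []
    for i in range(n * factor):
        # Position of this output sample in source coordinates (pixel centers).
        src = (i + 0.5) / factor - 0.5
        base = math.floor(src)
        total = 0.0
        weight_sum = 0.0
        for k in range(base - a + 1, base + a + 1):
            w = lanczos_kernel(src - k, a)
            # Clamp indices at the borders (edge replication, an assumption).
            total += w * row[min(max(k, 0), n - 1)]
            weight_sum += w
        # Normalize so that flat regions stay exactly flat.
        out.append(total / weight_sum)
    return out
```

Every output value depends only on the input row and the fixed kernel, so the same input always yields the same output. Because the kernel has negative lobes, values can slightly overshoot next to hard edges, which is the mild ringing users sometimes notice with Lanczos.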

Key Advantages of the Interpolation Bypass

- Predictability: No black-box processing means users know exactly what happens at each step.

- Fewer server dependencies: Everything can be processed locally, free from server lag or downtime.

- Layered enhancement: Allows for stack-based sharpening and color correction based on user input, not algorithmic assumption.
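The layered, user-driven sharpening described above can be sketched as an unsharp mask whose strength is controlled per pixel by a user-painted mask. This is an illustrative sketch only: `box_blur` and `selective_unsharp` are hypothetical helper names, images are plain nested lists of grayscale values in the 0 to 255 range, and a box blur stands in for the Gaussian blur that image editors typically use.

```python
def box_blur(img, radius=1):
    """Average each pixel with its neighbors inside a square window."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += img[yy][xx]
                        count += 1
            out[y][x] = acc / count
    return out


def selective_unsharp(img, mask, amount=0.8, radius=1):
    """Sharpen only where mask > 0; mask values in [0, 1] scale the effect."""
    blurred = box_blur(img, radius)
    out = []
    for y, row in enumerate(img):
        out_row = []
        for x, value in enumerate(row):
            detail = value - blurred[y][x]            # high-frequency part
            sharpened = value + amount * mask[y][x] * detail
            out_row.append(min(255.0, max(0.0, sharpened)))  # clamp to range
        out.append(out_row)
    return out
```

A mask of zeros leaves the image untouched, so the user decides exactly which regions (eyes, fabric, foliage) receive sharpening and how much.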

How the Interpolation Workflow Works Step-by-Step

For those new to image processing, the interpolation bypass is simple enough to apply with widely available tools, several of them open source. Below is a basic step-by-step implementation strategy:

- Resize the image using interpolation: Use software like GIMP, Photoshop, or ImageMagick to upscale using Bicubic or Lanczos methods.

- Apply manual texture enhancement: Select portions of the image (e.g., eyes, fabric) and apply edge-preserving filters or local contrast enhancement.

- Optional, model-based detail patches: Where interpolation alone looks too soft, run a GAN-based model such as ESRGAN on selected patches only and blend the results back in manually, via patching scripts or plugins.

- Final sharpening and tone correction: Final pass through sharpening tools and exposure correction to preserve artistic intent.

Users report that this workflow restores clarity in images that Magnific AI struggled to handle accurately, even under ideal circumstances.
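The resize and final-sharpening steps can be scripted with ImageMagick. The sketch below only builds the command line, using ImageMagick's `-filter`, `-resize`, and `-unsharp` options; the function names, the default 400% scale, and the unsharp settings are assumptions chosen for illustration, and the `magick` binary name assumes ImageMagick 7 (older installs use `convert`). Manual texture work and tone correction are left to an image editor.

```python
import subprocess


def build_bypass_command(src, dst, scale_percent=400, interp="Lanczos",
                         unsharp="0x1.0+0.8+0.02", binary="magick"):
    """Build an ImageMagick command: interpolated resize, then unsharp mask.

    interp is passed to -filter (for example "Lanczos" or the cubic "Catrom").
    unsharp uses ImageMagick's radius x sigma + gain + threshold syntax.
    All defaults are illustrative starting points, not recommended values.
    """
    return [
        binary, src,
        "-filter", interp,
        "-resize", f"{scale_percent}%",
        "-unsharp", unsharp,
        dst,
    ]


def run_bypass(src, dst, **options):
    """Run the command; raises CalledProcessError if ImageMagick fails."""
    subprocess.run(build_bypass_command(src, dst, **options), check=True)
```

For example, `build_bypass_command("in.png", "out.png", scale_percent=200)` yields a command that doubles the image with Lanczos filtering and applies a light unsharp mask. Because everything runs locally, a failure produces an explicit error from ImageMagick rather than a silent timeout.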

Community Feedback and Adoption

The response from professionals has been mixed but generally positive. Designers, particularly in gaming and digital art communities, have reported significant improvement in visual fidelity using the interpolation bypass method. Although it requires more manual input, they appreciate its lower error rate and consistent behavior.

On platforms like GitHub and Behance, several new forks and plug-ins are emerging that center around this hybrid model — interpolation first, intelligence second. It is not seen as a replacement for AI upscaling yet, but rather a parallel approach grounded in reliability.

Critics rightly note that this method requires training and a creative eye, unlike Magnific AI’s streamlined button-click approach. However, in professional environments where output quality is paramount, predictability often trumps automation.

Conclusion

Magnific AI’s failure to consistently deliver high-quality results, especially under load or when processing complex textures, has highlighted the fragility of modern AI’s dependence on trained datasets and centralized compute resources. While the technology remains impressive, it is not yet foolproof.

The interpolation bypass method offers a pragmatic, controllable alternative that delivers sharp textures and consistency. It’s a timely reminder that sometimes, traditional methods — blended with modern tooling — can provide reliable results where purely AI-based approaches stumble.

As the field of image enhancement matures, hybrid workflows that balance algorithmic intelligence with manual craftsmanship may well define the next era in visual production.