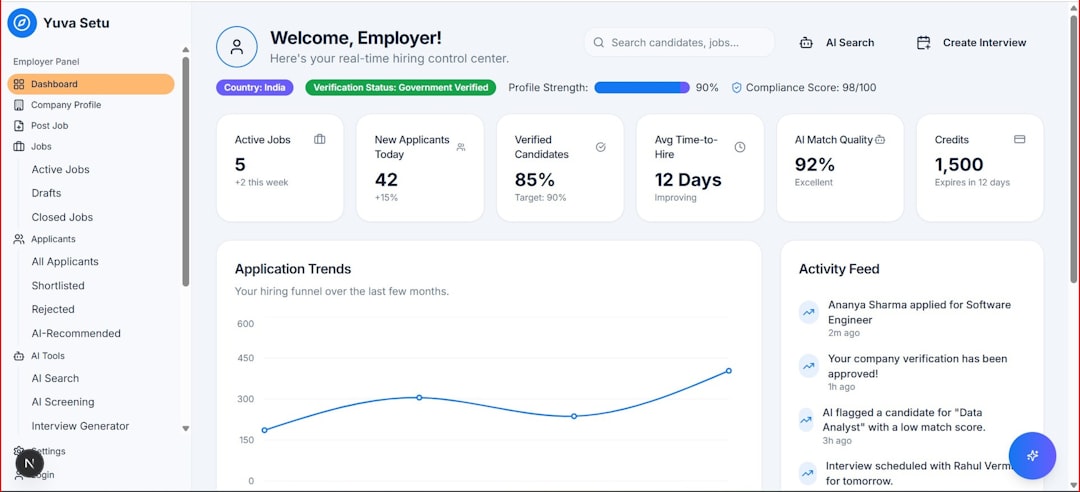

Large language models (LLMs) are powerful, but their outputs are only as good as the context they receive. As organizations increasingly rely on LLMs for content generation, coding, research, and customer support, the need for precise, structured, and dynamic context has become critical. This is where context engineering platforms come in: tools designed to optimize prompts, manage knowledge sources, and improve the overall reliability of AI responses.

TLDR: Context engineering platforms help teams structure, enrich, and manage the information fed into large language models. By improving prompt design, retrieval systems, and memory management, these tools significantly enhance output accuracy and consistency. Platforms like LangChain, LlamaIndex, Humanloop, Dust, Vellum, and PromptLayer offer different approaches to improving LLM results. Choosing the right one depends on whether the focus is evaluation, orchestration, experimentation, or knowledge integration.

Below are six context engineering platforms that help businesses and developers get better, more reliable results from LLMs.

1. LangChain

LangChain is one of the most recognized frameworks for context engineering. It enables developers to build applications powered by LLMs using modular “chains” that connect prompts, tools, memory, and external data sources.

At its core, LangChain helps manage:

- Prompt templates for consistent formatting

- Memory layers to maintain conversation state

- Retrieval-augmented generation (RAG) pipelines

- Tool usage and agent workflows

This level of orchestration ensures that LLMs are not operating on isolated inputs but instead receive structured, relevant context pulled from databases, APIs, and documents.
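As a rough illustration of the pieces listed above (a prompt template, a memory layer, and a retrieval step feeding one prompt), here is a minimal sketch in plain Python. It does not use LangChain's API; every name in it (`PROMPT`, `ConversationMemory`, `retrieve`, `build_prompt`) is hypothetical, and the word-overlap retriever is a deliberately crude stand-in for a real one.

```python
from dataclasses import dataclass, field
from string import Template

# Hypothetical names throughout; this is not LangChain's API.
PROMPT = Template(
    "Context:\n$context\n\nConversation so far:\n$history\n\nQuestion: $question"
)


@dataclass
class ConversationMemory:
    """Keeps only the last `max_turns` exchanges so the prompt stays bounded."""
    max_turns: int = 3
    turns: list = field(default_factory=list)

    def add(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))
        self.turns = self.turns[-self.max_turns:]

    def render(self) -> str:
        lines = [f"User: {u}\nAssistant: {a}" for u, a in self.turns]
        return "\n".join(lines) or "(none)"


def retrieve(question: str, documents: dict) -> list:
    """Toy retriever: keep documents that share at least one word with the question."""
    words = set(question.lower().split())
    return [text for text in documents.values() if words & set(text.lower().split())]


def build_prompt(question: str, memory: ConversationMemory, documents: dict) -> str:
    """Fill the template with retrieved context and the rendered conversation."""
    context = "\n".join(retrieve(question, documents)) or "(no matching documents)"
    return PROMPT.substitute(context=context, history=memory.render(), question=question)


docs = {
    "refunds": "refunds are processed within 5 business days",
    "shipping": "shipping takes 2 days within the EU",
}
memory = ConversationMemory()
memory.add("Hi", "Hello, how can I help?")
prompt = build_prompt("how long do refunds take", memory, docs)
print(prompt)
```

The point of the structure is that the template, the memory policy, and the retriever can each be changed without touching the other two.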

LangChain is especially useful for teams building complex AI systems such as chatbots that need persistent memory or research tools that summarize internal documentation. By separating logic from raw prompts, it can reduce hallucinations and improve output reliability.

Best for: Developers building custom AI workflows and RAG systems.

2. LlamaIndex

LlamaIndex focuses specifically on connecting LLMs to external data sources. While many platforms provide orchestration, LlamaIndex specializes in structured data ingestion and retrieval optimization.

It allows organizations to index:

- PDFs and documents

- Databases

- SaaS applications

- Internal company knowledge bases

Instead of dumping large amounts of raw data into prompts, LlamaIndex structures the data and retrieves only what is relevant to a query. This targeted retrieval tends to improve accuracy and reduces the number of tokens spent on irrelevant text.
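The idea of retrieving only what is relevant can be sketched without any library: score each chunk against the query and keep the top few. The example below is an assumption-laden toy, not LlamaIndex's API. It uses bag-of-words cosine similarity where a real system would typically use embeddings, and `tokenize`, `cosine`, and `top_k` are hypothetical helper names.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase words with surrounding punctuation stripped."""
    return [w.strip(".,?!").lower() for w in text.split() if w.strip(".,?!")]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: list, k: int = 2) -> list:
    """Return up to k chunks with a non-zero score, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(c))), c) for c in chunks]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:k]]


chunks = [
    "Employees accrue 25 vacation days per year.",
    "The office kitchen is cleaned every Friday.",
    "Unused vacation days expire in March.",
    "Expense reports are due by the 5th.",
]
selected = top_k("How many vacation days do employees get?", chunks, k=2)
print(selected)
```

Only the two vacation-related chunks reach the prompt; the kitchen and expense chunks score zero and are dropped.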

Its advanced features include:

- Multi-step query engines

- Context compression

- Metadata filtering

- Hybrid search techniques

For businesses managing large document repositories, LlamaIndex helps ground LLM outputs in trustworthy internal data rather than in the model's general training data.
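Two of the features listed above, metadata filtering and hybrid search, can be illustrated together. The sketch below filters candidates by metadata and then merges a keyword ranking with a vector ranking using reciprocal rank fusion, one common way to combine rankings. It is not LlamaIndex code: the two input rankings are hard-coded assumptions standing in for real search results, and the function names are hypothetical.

```python
def filter_by_metadata(docs: list, **required) -> list:
    """Keep documents whose metadata matches every required key/value pair."""
    return [d for d in docs if all(d["meta"].get(k) == v for k, v in required.items())]


def reciprocal_rank_fusion(rankings: list, k: int = 60) -> list:
    """Merge ranked lists of ids: each list contributes 1 / (k + rank) per id."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


docs = [
    {"id": "a", "meta": {"dept": "legal", "year": 2024}},
    {"id": "b", "meta": {"dept": "legal", "year": 2024}},
    {"id": "c", "meta": {"dept": "hr", "year": 2024}},
    {"id": "d", "meta": {"dept": "legal", "year": 2024}},
]
allowed = {d["id"] for d in filter_by_metadata(docs, dept="legal", year=2024)}

# Assumed outputs of a keyword search and a vector search over the same corpus.
keyword_ranking = [i for i in ["c", "b", "a", "d"] if i in allowed]
vector_ranking = [i for i in ["a", "c", "d", "b"] if i in allowed]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

Document `c` ranks well in both searches but never reaches the fusion step, because the metadata filter removes it first.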

Best for: Organizations implementing knowledge-based AI systems.

3. Humanloop

Humanloop focuses on evaluation and iterative improvement of LLM systems. Context engineering is not just about prompt design; it also involves testing, feedback, and measurable improvement.

Humanloop allows teams to:

- Create structured prompt experiments

- Run A/B tests on different prompt versions

- Collect human feedback

- Evaluate outputs against benchmarks

This platform bridges the gap between development and production. Instead of guessing which prompt works best, teams can analyze performance data and refine their context strategies systematically.
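To make "analyze performance data" concrete, here is a small sketch of comparing two prompt variants against a benchmark. It is not Humanloop's API. `fake_model` is a hypothetical, deterministic stand-in for a real LLM call, and the variants, benchmark, and exact-match metric are illustrative assumptions.

```python
def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call: answers tersely only when the prompt asks for it."""
    question = prompt.rsplit("Q: ", 1)[1]
    answer = {"2+2": "4", "capital of France": "Paris"}.get(question, "unknown")
    if "Answer with a single word or number." in prompt:
        return answer
    return f"I think the answer is {answer}."


VARIANTS = {
    "A": "Q: {question}",
    "B": "Answer with a single word or number.\nQ: {question}",
}
BENCHMARK = [("2+2", "4"), ("capital of France", "Paris")]


def exact_match_rate(template: str) -> float:
    """Fraction of benchmark questions whose output equals the expected answer."""
    hits = sum(
        fake_model(template.format(question=q)) == expected
        for q, expected in BENCHMARK
    )
    return hits / len(BENCHMARK)


results = {name: exact_match_rate(template) for name, template in VARIANTS.items()}
best = max(results, key=results.get)
print(results, best)
```

In practice the benchmark would be larger and the metric richer (human ratings, model-graded scores), but the loop has the same shape: same inputs, different prompts, one comparable number per variant.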

For enterprises deploying AI into customer-facing applications, this evaluative layer is critical to maintaining high-quality outputs and minimizing reputational risks.

Best for: Teams that require controlled experimentation and measurable AI improvement.

4. Dust

Dust is designed to help companies create AI-powered internal assistants by integrating LLMs directly with workplace tools. It places heavy emphasis on contextual grounding from internal systems.

With Dust, organizations can connect:

- Notion workspaces

- Slack conversations

- Google Drive documents

- Internal APIs

This integration ensures that responses are based on real company knowledge rather than generic internet data. Employees can query internal documentation, summarize reports, or automate repetitive tasks with improved contextual awareness.

Dust also emphasizes security and access controls, ensuring that sensitive context is only accessible to authorized users. This makes it particularly appealing for medium-to-large enterprises.
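The access-control idea can be sketched as a filter applied before any context is assembled, so restricted documents never reach the prompt. This is an illustrative assumption about how such a check might look, not Dust's implementation; `Document`, `visible_to`, and `build_context` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    text: str
    allowed_groups: frozenset


def visible_to(user_groups, documents: list) -> list:
    """Keep documents that share at least one group with the user."""
    groups = frozenset(user_groups)
    return [doc for doc in documents if groups & doc.allowed_groups]


def build_context(user_groups, documents: list) -> str:
    """Assemble prompt context from permitted documents only."""
    return "\n".join(f"[{d.title}] {d.text}" for d in visible_to(user_groups, documents))


DOCS = [
    Document("Holiday calendar", "Offices close on 24 December.", frozenset({"everyone"})),
    Document("Salary bands", "Band 3 starts at level L4.", frozenset({"hr"})),
    Document("Incident runbook", "Page the on-call engineer first.", frozenset({"engineering"})),
]

engineer_context = build_context({"everyone", "engineering"}, DOCS)
print(engineer_context)
```

Filtering before retrieval, rather than asking the model to withhold information, means a prompt injection cannot leak a document the user was never allowed to see.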

Best for: Companies building secure internal AI assistants.

5. Vellum

Vellum focuses on prompt management, experimentation, and deployment. It offers a structured interface where teams can design prompts, test variations, and monitor production performance.

One of the biggest challenges in context engineering is version control. Prompts often change over time, and without proper tracking, teams lose visibility into which versions perform best. Vellum addresses this by:

- Providing prompt versioning

- Enabling side-by-side comparisons

- Tracking historical performance metrics

- Simplifying deployment updates

By treating prompts like code, Vellum introduces discipline into LLM development workflows. This structured approach improves reproducibility and reduces unexpected output degradation when scaling AI systems.
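Treating prompts like code mostly means giving each version a stable identifier and making deployment an explicit, reversible step. The sketch below shows one way to do that with content hashes. It is a hypothetical `PromptRegistry`, not Vellum's API, and it keeps everything in memory for brevity.

```python
import hashlib


class PromptRegistry:
    """In-memory prompt store with content-addressed versions (hypothetical)."""

    def __init__(self):
        self._versions = {}  # prompt name -> list of (version_id, text)
        self._live = {}      # prompt name -> deployed version_id

    def register(self, name: str, text: str) -> str:
        """Store a prompt text and return its version id (first 8 hex chars of SHA-256)."""
        version_id = hashlib.sha256(text.encode("utf-8")).hexdigest()[:8]
        history = self._versions.setdefault(name, [])
        if all(existing != version_id for existing, _ in history):
            history.append((version_id, text))
        return version_id

    def deploy(self, name: str, version_id: str) -> None:
        """Point the live prompt at a previously registered version."""
        if version_id not in dict(self._versions.get(name, [])):
            raise KeyError(f"unknown version {version_id!r} for prompt {name!r}")
        self._live[name] = version_id

    def live(self, name: str) -> str:
        """Return the text of the currently deployed version."""
        return dict(self._versions[name])[self._live[name]]

    def history(self, name: str) -> list:
        """Version ids in registration order."""
        return [version_id for version_id, _ in self._versions.get(name, [])]


registry = PromptRegistry()
v1 = registry.register("summarize", "Summarize the text below.")
v2 = registry.register("summarize", "Summarize the text below in three bullet points.")
registry.deploy("summarize", v2)
after_deploy = registry.live("summarize")
registry.deploy("summarize", v1)  # roll back to the earlier version
after_rollback = registry.live("summarize")
```

Because the id is derived from the text, re-registering an unchanged prompt yields the same version, and performance metrics can be keyed by that id.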

Best for: Product teams managing prompts in production environments.

6. PromptLayer

PromptLayer acts as a monitoring and logging tool for LLM usage. It captures every prompt and response interaction, making debugging and optimization much easier.

Its primary value lies in:

- Request logging

- Response tracking

- Metadata tagging

- Workflow visibility

When outputs do not meet expectations, teams can trace exactly what context was provided and identify where adjustments are needed. This transparency is crucial for organizations working with high-stakes AI applications.
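Tracing "exactly what context was provided" requires recording each call at the point where it happens. A minimal sketch of that idea is a decorator that wraps the model call and appends a record to a log. This is not PromptLayer's API; `logged`, `CALL_LOG`, and `fake_model` are hypothetical, and a real system would write to durable storage rather than a list.

```python
import functools
import time

CALL_LOG = []  # in-memory stand-in for a real log store


def logged(**tags):
    """Decorator factory: record prompt, response, tags, and latency for each call."""
    def decorator(model_fn):
        @functools.wraps(model_fn)
        def wrapper(prompt: str) -> str:
            started = time.perf_counter()
            response = model_fn(prompt)
            CALL_LOG.append({
                "prompt": prompt,
                "response": response,
                "tags": dict(tags),
                "latency_s": time.perf_counter() - started,
            })
            return response
        return wrapper
    return decorator


@logged(feature="support-bot", prompt_version="v2")
def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call."""
    return f"echo: {prompt}"


fake_model("Where is my order?")
fake_model("Cancel my subscription")

# Later, during debugging: pull every call made by one feature.
support_calls = [entry for entry in CALL_LOG if entry["tags"].get("feature") == "support-bot"]
```

Tagging each record with the feature and prompt version is what makes the log searchable when a specific output needs explaining.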

While it does not replace orchestration frameworks, PromptLayer complements them by adding an observability layer to LLM systems.

Best for: Teams prioritizing debugging, monitoring, and transparency.

How Context Engineering Platforms Improve LLM Outputs

The common thread across all these platforms is improved context management. They address core LLM challenges such as:

- Hallucinations caused by insufficient grounding

- Inconsistent outputs from poorly structured prompts

- Token inefficiency due to irrelevant context

- Lack of evaluation in production systems

By introducing structured retrieval, experimentation pipelines, memory handling, and monitoring systems, context engineering platforms transform LLM usage from trial-and-error prompting into a disciplined engineering practice.
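The token-inefficiency point lends itself to a short example: given chunks that already carry a relevance score, pack the best ones into a fixed budget and drop the rest. The sketch below is an assumption-based toy. It approximates tokens by whitespace-separated words, whereas a real system would use the model's tokenizer, and `pack_context` is a hypothetical name.

```python
def pack_context(scored_chunks: list, budget: int) -> tuple:
    """Greedily take chunks by descending score while the word count fits the budget."""
    chosen, used = [], 0
    for _, text in sorted(scored_chunks, key=lambda pair: pair[0], reverse=True):
        cost = len(text.split())  # crude proxy for token count
        if used + cost <= budget:
            chosen.append(text)
            used += cost
    return chosen, used


scored = [
    (0.9, "refunds take five business days"),
    (0.7, "refund requests need an order number and a reason"),
    (0.2, "our office dog is named Biscuit"),
    (0.6, "contact support by email"),
]
chosen, used = pack_context(scored, budget=10)
print(chosen, used)
```

With a budget of 10 words, the top-scoring chunk and the short contact line fit; the longer refund chunk and the irrelevant one are left out.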

This shift is especially important as AI moves from experimentation into mission-critical workflows. Customer support bots, legal document analysis tools, and financial research agents require predictable, accurate, and auditable outputs, which ad hoc prompts cannot reliably provide.

Choosing the Right Platform

Selecting the best context engineering platform depends on organizational goals:

- If building complex AI workflows → LangChain

- If focusing on document indexing and RAG → LlamaIndex

- If prioritizing evaluation and testing → Humanloop

- If deploying internal enterprise assistants → Dust

- If managing prompt lifecycle and deployment → Vellum

- If emphasizing monitoring and logging → PromptLayer

Many teams combine multiple tools to create a comprehensive AI infrastructure. For example, a company might use LlamaIndex for retrieval, LangChain for orchestration, and PromptLayer for monitoring.

Ultimately, context engineering is becoming a cornerstone of responsible AI deployment. As the complexity of LLM applications grows, these platforms provide the structure necessary to harness AI effectively while minimizing risks.

Frequently Asked Questions (FAQ)

1. What is context engineering in LLMs?

Context engineering refers to the systematic design, structuring, and management of the information provided to a large language model. It includes prompt design, retrieval pipelines, memory systems, evaluation frameworks, and monitoring tools.

2. Why is context important for LLM outputs?

LLMs generate responses from what they learned in training and from the input context they receive, and the context is the part teams control at request time. Well-structured and relevant context improves accuracy, coherence, and reliability, while poorly designed context increases hallucinations and inconsistencies.

3. Are context engineering platforms only for developers?

No. While some tools are developer-focused, many platforms offer user-friendly interfaces that allow product managers, analysts, and operations teams to experiment with and refine AI systems.

4. Can multiple platforms be used together?

Yes. Many organizations combine orchestration frameworks, indexing tools, and monitoring platforms to create a complete LLM infrastructure.

5. Do these platforms reduce hallucinations completely?

They significantly reduce hallucinations by grounding outputs in structured context and enabling evaluation. However, no platform can eliminate hallucinations entirely; careful engineering and oversight remain essential.

6. Is context engineering necessary for small projects?

For simple use cases, basic prompt design may be sufficient. However, as soon as AI is deployed in production or connected to proprietary knowledge, structured context engineering becomes highly beneficial.